Learn the latest

AI Skills

- Hands-on, in-depth, project-based

- Taught by an instructor with significant teaching experience

- Complete a generative AI capstone project using text, code, and image generation tools

- Develop a confident, deep understanding of training and stochastic gradient descent

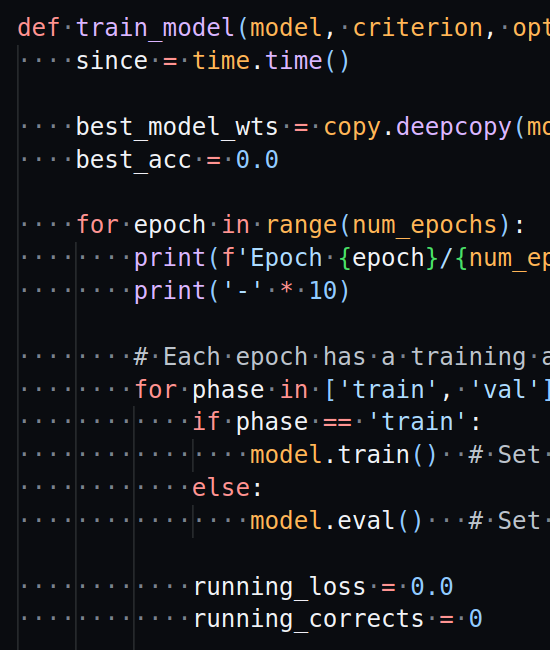

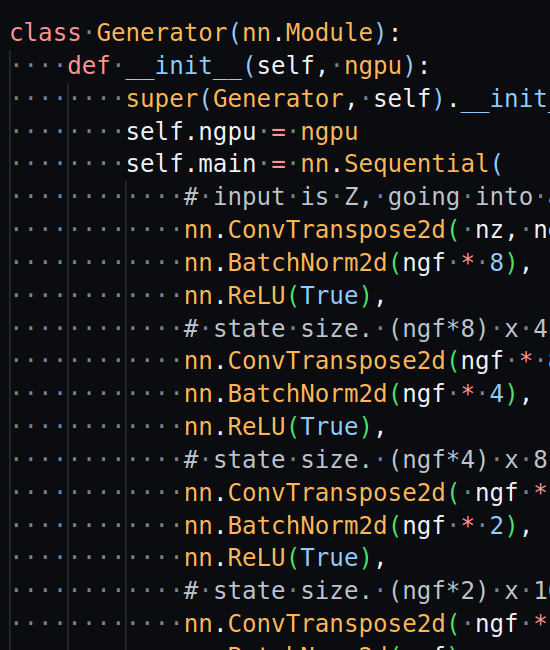

- Hands-on experience writing training loops with PyTorch

- In-depth treatment of major neural net architectures and techniques currently being used

- Develop a high-level mental model of how ML hardware influences neural net design

- Hands-on experience using in-context learning and orchestration to build on existing LLMs

- Overview of the current research that will extend LLMs even further

Basics:

- Basics of Neural Networks

- Training Deep Neural Networks using Stochastic Gradient Descent

- Using PyTorch

- Convolutional Neural Networks

- Embeddings

- Recurrent Neural Networks

- ML Hardware

Core:

- Transformer Architecture (heart of an LLM)

- Generative Adversarial Networks and Autoencoders

- Stable Diffusion

- Reinforcement Learning

- Transfer Learning and Fine Tuning

- Few-Shot and Zero-Shot Learning

- Training with Data and Model Parallelism

- Prompt Engineering

- Orchestration

Current State of the Art:

- What comes after Self-Attention?

- Multi-Modal and Augmented Transformers

- Autonomous Agents

- Intelligence? (discussion)

Looking for something more advanced? Advanced AI: LLM Research

Or something totally unique (and not AI related)? HW/SW Codesign: Forth on Custom Silicon

Use the latest generative AI tools to work on a project that combines text, code, and image generation. We'll use project-based learning to better understand what's currently possible and how generative AI can be used.

Dive deep into the concepts behind LLMs. This course is perfect for anyone who wants to go under the hood and understand why the Transformer Architecture and Reinforcement Learning, when applied together, are so powerful.

We will roll up our sleeves and write Python code using PyTorch to describe every detail of deep learning models and use orchestration tools like LangChain and DSPy to explore the latest techniques that layer on top of LLMs.

By the end of the course you will have trained models with various architectures and used multiple generative AI techniques. I'll also go over the basic concepts multiple times to build confidence in your understanding of the training process.

We'll go over ML hardware early on in the course so that it makes sense why these particular architectures work so well. You'll also get a glimpse into what's next after standalone LLMs.

The course has been refined with the help of expert ML researchers and practicing software engineers to focus on the most important AI concepts that are relevant today.

- Set up your local ML development environment

- Use PyTorch, NumPy, and other Python libraries (matplotlib, xgboost, shap, etc.)

- Explore pre-trained models and public datasets

- Train small models from scratch to build confidence in your understanding of stochastic gradient descent

- Learn production-ready natural language processing and machine vision techniques

- Use transfer learning to quickly adapt an existing model to your needs

- Practice hyperparameter tuning

- Learn how Reinforcement Learning (RL), Generative Adversarial Networks (GAN), and Autoencoders can be used in practice

- Design and run a small ML research experiment

- Use cloud GPUs to train a larger network in parallel

- Use a combination of in-context learning, fine-tuning, and RLHF to create efficient LLM-based solutions to tasks

- Use orchestration to "close the loop" on LLMs and super-charge your productivity
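To give a feel for what training a small model from scratch with stochastic gradient descent looks like, here is a minimal sketch in plain Python rather than PyTorch. It fits a one-variable linear model. The function name `sgd_fit`, the toy data, the learning rate, and the epoch count are all illustrative assumptions, not material taken from the course.

```python
import random


def sgd_fit(xs, ys, lr=0.05, epochs=200, seed=0):
    """Fit y ~ w * x + b with per-sample stochastic gradient descent."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the samples in a new random order each epoch
        for i in indices:
            error = (w * xs[i] + b) - ys[i]  # prediction minus target
            # Gradients of the per-sample loss 0.5 * error**2 w.r.t. w and b
            w -= lr * error * xs[i]
            b -= lr * error
    return w, b


# Hypothetical toy data generated from y = 2x + 1 (no noise)
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]
w, b = sgd_fit(xs, ys)
print(f"w={w:.3f}, b={b:.3f}")
```

The same loop structure (shuffle, predict, measure the error, step against the gradient) carries over to larger models; a framework such as PyTorch mainly automates the gradient computation.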

There are no hard prerequisites, but some experience with Python and a general STEM background are helpful.

Machine learning is a broad discipline that builds on everything from statistics and linear algebra to coding and electrical engineering. Current AI techniques draw from an assortment of concepts from each with a special focus on specific topics from statistics, linear algebra, and multivariable calculus.

If you're familiar with concepts like regression, entropy, linear superposition, and dot product, you'll be fine. And if not, we are going to cover those topics in detail.
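As a rough self-check on two of the concepts mentioned above, here is a small sketch of the dot product and Shannon entropy in plain Python. The helper names `dot` and `entropy_bits` are hypothetical and chosen only for this illustration.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))


def entropy_bits(probs):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    # Terms with p == 0 contribute nothing and are skipped to avoid log2(0)
    return -sum(p * math.log2(p) for p in probs if p > 0)


print(dot([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))  # 1*4 + 2*5 + 3*6 = 32.0
print(entropy_bits([0.5, 0.5]))               # a fair coin: 1.0 bit
print(entropy_bits([0.25] * 4))               # four equally likely outcomes: 2.0 bits
```

If both functions read naturally to you, the math in the course should feel familiar.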

When it comes to writing code for ML, I'll cover every aspect of Python we need for the course. The ML scripts we will write tend to look similar to each other and are much simpler than traditional software engineering.

It's an intense course. If you don't already have the equivalent of an engineering or science degree, expect to spend more time on self-study outside of class. I work hard to find the best way to explain the core concepts and spend significant time finding high-quality supporting material to fill in any gaps.

I have created a short diagnostic to help gauge your preparedness for the course.

If you have any questions about the course, please do not hesitate to contact me.

Other Courses - Advanced AI: LLM Research and HW/SW Codesign: Forth on Custom Silicon

" I was lucky to find Steve's Semi Engineering course; it was a blast. As a software person, I've always been interested in hardware. However, I never dreamed of getting started with Chip/VLSI design and eventually going through the tape-out process.

Steve is a great mentor, constantly gauging the students' understanding and adjusting his teaching style accordingly. The format combines hands-on sessions and lectures with prompt feedback, greatly accelerating the learning process.

For adult learners, Steve has a structured teaching style, dividing core concepts into chapters. Learning new skills in a short amount of time after work can be overwhelming, but he lays out the foundations and provides an overview at an appropriate abstraction level, helping students develop mental models early on.

The Open Source design flow we rely on is still in its early stages, with mostly community-maintained toolchains that originated from academia or individual efforts, which can make them a bit rough around the edges. A significant part of our capstone project involves learning to use these toolchains for design. It's easy to feel lost when navigating multiple codebases while still grasping the hierarchy of concepts, but Steve is there to help me overcome those challenges.

Steve is an avid learner who is always building and experimenting. Whether you're a beginner starting to code or an experienced professional, I highly recommend him for your next learning project."

- Ian

" I had the privilege of being a student in Steve Goldsmith's 1-on-1 Full Stack Web Development Cohort at Aurifex Labs, founded by Steve himself, and I can confidently say that it was a transformative learning experience. Steve's approach to teaching is fully project-based, ensuring that we not only learn the concepts but also apply them in projects.

One of the most impressive aspects of Steve's teaching style is his commitment to addressing every single doubt. He patiently explains each concept until it is crystal clear, never rushing to go fast. The sessions are not bound by a strict time limit; instead, Steve ensures that all doubts are resolved before concluding, even if it means extending the session.

Steve, as the founder of Aurifex Labs, provides a supportive and nurturing environment for learning. His expertise in web development is evident in every lesson, and his passion for teaching is truly inspiring. I wholeheartedly recommend Steve Goldsmith's Full Stack Web Development Cohort at Aurifex Labs to anyone looking to elevate their skills and unlock their potential in the world of web development 1-1."

- Murali

" I was fortunate to find Steve’s one-on-one web development course at Aurifex Labs. Frankly, it was the best learning experience that could have happened to me. The course was highly structured and focused on programming core concepts and principles, i.e., data types, loops, objects, arrays, D.R.Y. (Don’t Repeat Yourself), etc.

I’ve always been very curious about coding as I had worked in Quality Assurance testing with software engineers, but I had always thought it was out of my reach or too “difficult to learn”. I joined coding bootcamps but their teaching style was too fast paced and didn’t properly explain the key concepts that were important to understand in computer science. Steve really eased those doubts.

He starts you off with simple fun projects! (space invaders) and makes you really think about what you're coding instead of just telling you what to type. He provides you live feedback on what is going on in the back-end of the program and challenges you logically to think of the next step. Even if you do get stuck, Steve is extremely patient to help you process all of the information being thrown at you.

If you’re curious about learning web development, AI, data structure/algorithms or even looking for a change of career, then Steve at Aurifex Labs is definitely the place for you to harness the skills needed to be adequately prepared for the job market. I can’t recommend his web development course enough. All you have to do is take the plunge of courage to tackle head on those doubts and Steve can lead you there.

“We suffer more in our imagination than in reality.” - Seneca "

- Eric
